Overview

- World Labs’ $1 billion funding round signals that spatial AI – technology that generates navigable 3D environments from text or images – is becoming core infrastructure for games, film, robotics, and immersive experiences.

- World models accelerate environment creation, but production-ready games and XR applications require additional layers of expertise – interaction design, multiplayer networking, platform optimization, and domain-specific tooling – that sit outside the generative pipeline.

The $1 Billion Bet on Spatial Intelligence

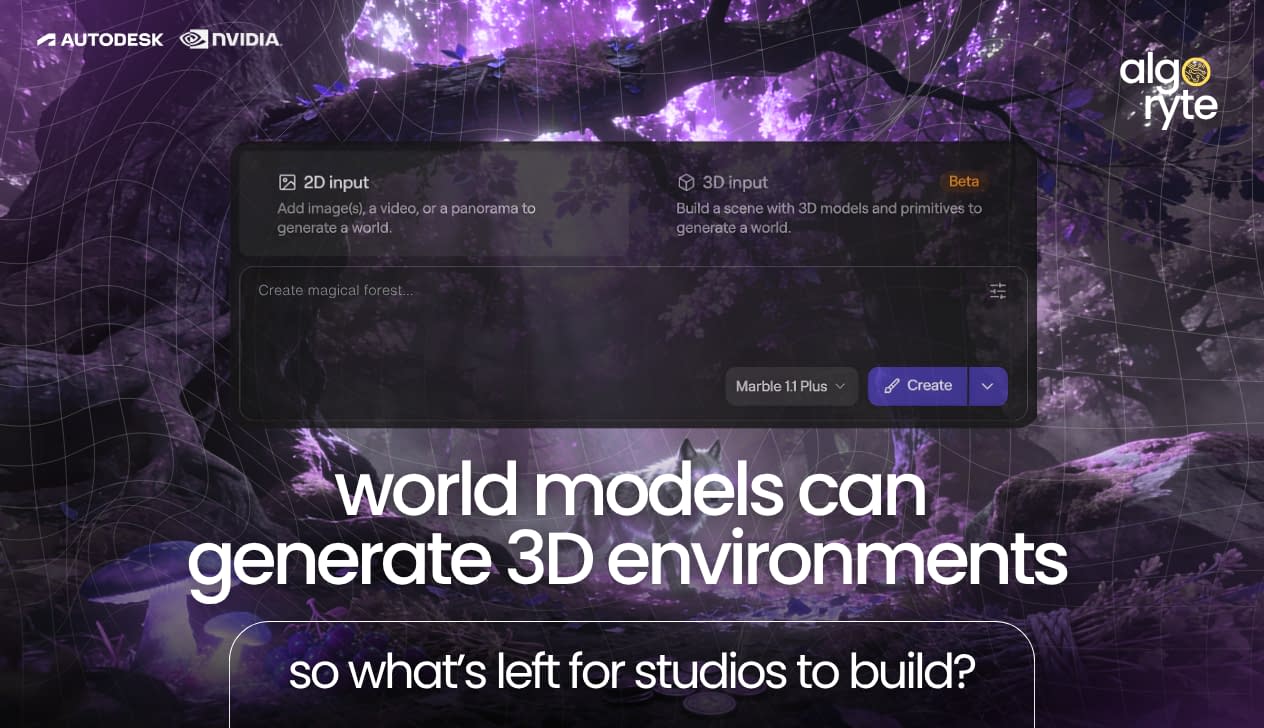

World models are now a billion-dollar category. World Labs announced $1 billion in new funding in February 2026, with investors including AMD, Autodesk, NVIDIA, Fidelity, and Emerson Collective. Founded by AI pioneer Fei-Fei Li, the company is building what it calls “spatial intelligence” – a new frontier in AI focused on systems that understand and generate 3D environments rather than just text or flat images.

World Labs’ first product, Marble, generates explorable 3D worlds from text prompts, images, videos, or 360-degree panoramas. The company also released its world models API in January 2026, allowing developers to integrate generation capabilities into their own applications.

This is not an isolated bet:

- World model companies attracted more than $2 billion in funding in the first three months of 2026.

- Yann LeCun’s AMI Labs followed in March with a $1.03 billion seed round, the largest ever for a European company.

- Google DeepMind’s Genie 3 generates real-time interactive environments at 24 frames per second.

- NVIDIA’s Cosmos world foundation models have been downloaded over 2 million times.

- Runway released its first world model, GWM-1, in December 2025.

PitchBook projects that the market for world models in gaming could grow from $1.2 billion over the 2022–2025 period to $276 billion by 2030.

While large language models predict the next word, world models predict the next state of a physical environment – accounting for physics, spatial relationships, and how actions affect surroundings. For games, film, robotics, and XR, this represents a fundamental shift in how 3D content gets created.

What World Models Actually Do

In Marble, users can walk through generated environments, edit specific elements, expand and combine worlds, and export the results in various 2D and 3D formats.

The implications for creative workflows are significant:

- Concept artists can generate environment references in minutes instead of hours.

- Game designers can prototype levels and test spatial ideas before committing to full production.

- Film and VFX teams can build virtual sets and previsualize shots with unprecedented speed.

- Architecture and design studios can create immersive walkthroughs from sketches or floor plans.

World Labs frames this as “3D as code” – the idea that text is becoming the universal interface for generating and manipulating spatial content, just as it became the interface for software through programming languages.

From Generated Worlds to Production Experiences

Here is where the opportunity gets interesting for studios and developers.

World models generate environments. They create the raw spatial canvas. But games, XR applications, and interactive experiences require layers that sit on top of generation:

Interaction Design

A generated forest is a backdrop. A forest where players can climb trees, forage for items, and encounter AI creatures is a game. The logic that makes environments respond to player input – physics, collision, state management, and feedback systems – requires design and development beyond what generation provides.

Multiplayer & Networking

World models focus on environment generation, while production games and collaborative XR experiences also require multiplayer synchronization, real-time networking, and shared interaction between users.

- What happens when two players grab the same object?

- How do you replicate state across devices with different network conditions?

These are engineering challenges that require dedicated networking tooling, such as Photon or Unity’s Netcode for GameObjects.
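One common answer to the first question is to give each shared object a single authoritative owner and let a host arbitrate competing grab requests. The sketch below is a minimal, engine-agnostic illustration in plain Python; `SharedObject`, `HostArbiter`, and the first-request-wins rule are hypothetical choices made for this example and do not reflect the actual APIs of Photon or Unity's networking packages.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class SharedObject:
    """A networked object that at most one player may hold at a time (hypothetical model)."""
    object_id: str
    owner: Optional[str] = None  # None means the object is free to grab


@dataclass
class HostArbiter:
    """Hypothetical host-side arbiter: the host is the single source of truth for ownership."""
    objects: Dict[str, SharedObject] = field(default_factory=dict)

    def add(self, obj: SharedObject) -> None:
        self.objects[obj.object_id] = obj

    def request_grab(self, object_id: str, player: str) -> bool:
        """Grant the grab only if the object is free; requests are handled in arrival order."""
        obj = self.objects[object_id]
        if obj.owner is None:
            obj.owner = player
            return True
        # Someone already holds it: a later request loses unless it comes from the owner.
        return obj.owner == player

    def release(self, object_id: str, player: str) -> None:
        """Only the current owner can release the object."""
        obj = self.objects[object_id]
        if obj.owner == player:
            obj.owner = None


host = HostArbiter()
host.add(SharedObject("beaker"))
print(host.request_grab("beaker", "alice"))  # True: alice becomes the owner
print(host.request_grab("beaker", "bob"))    # False: bob's request is rejected
host.release("beaker", "alice")
print(host.request_grab("beaker", "bob"))    # True: the object was free again
```

First-request-wins is only one possible policy. In practice, a client usually shows its grab immediately and rolls it back if the host rejects it, which ties directly into the second question about players on different network conditions.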

Platform Optimization

A beautifully generated environment means nothing if it runs at 15 frames per second on target hardware. Mobile VR headsets, standalone AR glasses, and console platforms each have specific constraints around draw calls, texture memory, shader complexity, and thermal limits. Optimization is craftwork that follows generation.
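The arithmetic behind a frame-rate target is simple: at a refresh rate of f Hz, each frame has 1000 / f milliseconds for simulation and rendering combined. The sketch below computes that budget and checks a measured frame time against it. The 72 Hz and 90 Hz values are examples of refresh rates offered by standalone headsets, and the 10% headroom factor is an illustrative assumption rather than a platform requirement.

```python
def frame_budget_ms(refresh_hz: float) -> float:
    """Milliseconds available per frame at the given refresh rate."""
    if refresh_hz <= 0:
        raise ValueError("refresh rate must be positive")
    return 1000.0 / refresh_hz


def fits_budget(frame_time_ms: float, refresh_hz: float, headroom: float = 0.9) -> bool:
    """True if a measured frame time fits the budget while keeping some headroom.

    The default of 90% of the budget is an assumed safety margin, not a platform rule.
    """
    return frame_time_ms <= frame_budget_ms(refresh_hz) * headroom


for hz in (72, 90):
    print(f"{hz} Hz -> {frame_budget_ms(hz):.1f} ms per frame")
# 72 Hz -> 13.9 ms per frame
# 90 Hz -> 11.1 ms per frame

# A scene running at 15 fps takes about 66.7 ms per frame, far outside a 72 Hz budget.
print(fits_budget(1000.0 / 15, 72))  # False
```

Keeping headroom below the theoretical budget is a deliberate choice: frame-time spikes and thermal throttling mean a scene that only just fits on paper tends to drop frames in practice.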

Domain-Specific Tooling

A world model can generate a hospital environment. But a medical training simulation needs accurate procedure flows, assessment logic, and integration with learning management systems. An educational application needs lesson structures, progress tracking, and instructor tools. The domain expertise that makes an experience purposeful comes from humans, not just generation.

This is not a limitation of world models – it is simply a different layer of the stack. Generation handles the “what.” Design and development handle the “why” and “how.”

The Game Art Perspective

For game artists, world models change the conversation without eliminating the craft.

- Environment artists will increasingly work alongside generated outputs – using AI to establish base geometry, lighting setups, and material distribution, then refining, art-directing, and adding the details that give a world its signature feel. The speed gain is real. But stylistic consistency across a project, character design, UI/UX art, and the hand-crafted moments that players remember still require human direction.

- Technical artists face new workflows, such as optimizing AI-generated meshes for real-time rendering, creating LOD chains from high-fidelity outputs, and ensuring generated assets integrate cleanly with existing pipelines. The output of a world model is a starting point, not a ship-ready asset.

- Concept artists may find their role evolving toward creative direction – defining the prompts, references, and constraints that guide generation, then selecting and refining outputs. The volume of iteration increases; the need for taste and vision does not decrease.

The studios positioned to benefit most are those that treat world models as accelerants rather than replacements – integrating generated content into existing pipelines while maintaining the human oversight that ensures quality and coherence.

The XR Development Perspective

For XR developers, world models open possibilities while highlighting the complexity that makes immersive experiences work.

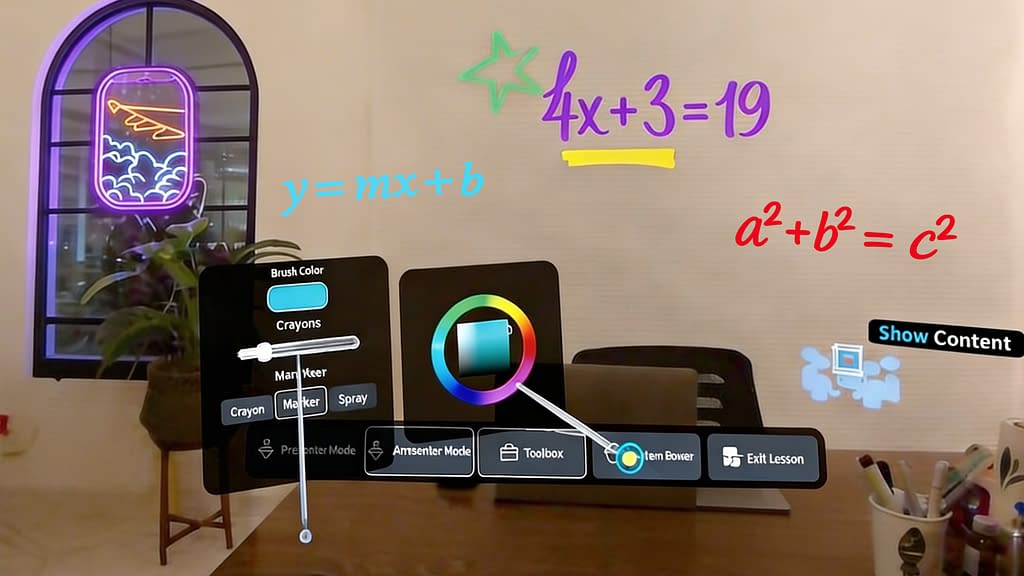

We are seeing this firsthand. Algoryte is currently building a cross-platform extended reality education application where students in a physical classroom wear headsets and interact with augmented content that teachers have placed in the room. An AI could generate a virtual chemistry lab or a 3D solar system model. But the production application requires:

- Station-based lesson design where different student groups see different content based on their assigned area.

- Multiple interaction modes – solo exploration, where changes stay local, versus cooperative manipulation using Photon Fusion 2, where actions replicate to teammates.

- A lesson builder mode we developed from scratch that lets teachers create and manage stations and content directly from their headsets.

- Platform optimization for standalone headsets in physical classrooms.

This is the kind of production complexity that defines professional XR development. World models might generate the visual assets – the 3D planets, the molecular structures, and the historical artifacts. But the interaction logic, the multiplayer architecture, the authoring workflows, and the platform-specific tuning require expertise that generation does not provide.

The opportunity for XR studios is to become the bridge between generated content and deployed experiences – and that is exactly where we are focused.

Where the Value Concentrates

The world model funding wave signals a shift in how 3D content will be created. But history suggests that when content creation gets cheaper and faster, the value concentrates in curation, integration, and experience design.

When stock photography became abundant, the value shifted to art direction. When website templates became free, the value shifted to UX design and custom development. When game engines became accessible, the value shifted to the studios that could ship polished products.

World models will likely follow the same pattern. Generation becomes infrastructure. The differentiation moves to:

- Creative Direction – defining what gets generated and ensuring it serves the project’s vision.

- Technical Integration – making generated content work within production pipelines, platforms, and performance budgets.

- Experience Design – turning spatial content into interactive, purposeful, and engaging applications.

- Domain Expertise – understanding the specific requirements of education, training, healthcare, retail, or entertainment well enough to build tools and experiences that actually solve problems.

Studios that combine these capabilities with fluency in generative tools will be positioned to deliver more, faster, at higher quality – without sacrificing the craft that makes games and XR experiences compelling.

Building on the Spatial AI Wave

World Labs’ $1 billion raise is a milestone, not an endpoint. The spatial computing market is projected to reach $1.2 trillion by 2035. The XR market alone is expected to exceed $100 billion by 2031. World models will accelerate content creation across all of these sectors.

For game developers and XR studios, the question is not whether to engage with spatial AI – it is how to integrate these tools while strengthening the capabilities that generation cannot replace.

At Algoryte, we develop games and immersive experiences across VR, AR, MR, and traditional platforms – from environment art and character design to multiplayer XR applications with real-time synchronization and custom tooling. We see world models as an exciting addition to the pipeline, not a replacement for the craft that makes interactive experiences work.

If you are exploring how spatial AI fits into your production workflow – or building XR experiences that require the layers beyond generation – let us connect.

Contact Algoryte to start the conversation.

FAQs

1. What are world models in artificial intelligence?

World models are AI systems that build internal representations of how physical environments work. While large language models predict the next word in a sequence, world models predict the next state of an environment – accounting for physics, spatial relationships, object behavior, and cause-and-effect dynamics. They learn by processing video, spatial data, and sensor inputs rather than text alone. Companies like World Labs, Google DeepMind, and Runway are building world models that can generate navigable 3D environments from text prompts, images, or video.

2. What is the difference between world models and large language models (LLMs)?

Large language models like GPT or Claude predict the next word in a text sequence. They excel at language tasks – writing, summarizing, and answering questions – but lack an understanding of physical space. World models predict the next state of an environment, learning physics, spatial relationships, and object behavior from video and sensor data rather than text. An LLM can describe a ball falling; a world model can simulate it with accurate gravity, bounce, and shadow. Many researchers see the two as complementary – LLMs handling language and reasoning, while world models handle spatial and physical intelligence.
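To make "predicting the next state" concrete, the sketch below advances a falling, bouncing ball one time step at a time with a hand-written update rule (semi-implicit Euler integration plus a simple floor bounce). It is not a world model; it is the kind of state-to-state mapping a world model has to learn implicitly from video instead of being given as equations. The gravity constant, restitution coefficient, and 60 Hz step are illustrative assumptions.

```python
from dataclasses import dataclass

GRAVITY = -9.81      # m/s^2 along the vertical axis
RESTITUTION = 0.8    # assumed fraction of speed kept after each bounce
DT = 1.0 / 60.0      # assumed time step (60 updates per second)


@dataclass(frozen=True)
class BallState:
    height: float    # metres above the floor
    velocity: float  # m/s, positive is upward


def next_state(s: BallState, dt: float = DT) -> BallState:
    """Map the current state to the next one, the mapping a world model learns implicitly."""
    v = s.velocity + GRAVITY * dt   # update velocity first (semi-implicit Euler)
    h = s.height + v * dt           # then move using the new velocity
    if h < 0.0:                     # passed through the floor: clamp and bounce with energy loss
        h = 0.0
        v = -v * RESTITUTION
    return BallState(h, v)


state = BallState(height=2.0, velocity=0.0)
for _ in range(120):                # two simulated seconds
    state = next_state(state)
print(f"height after 2 s: {state.height:.2f} m, velocity: {state.velocity:.2f} m/s")
```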

3. What are the challenges in deploying world models in real-world applications?

World models face several deployment hurdles. Computational cost is significant – processing 3D spatial data in real time requires substantial GPU resources. Generated environments often need optimization before they can run smoothly on target hardware, especially mobile devices or standalone VR headsets. Consistency remains a challenge – maintaining object permanence, accurate physics, and coherent geometry across extended interactions is difficult. Integration with existing production pipelines requires additional tooling. For applications like robotics or autonomous vehicles, the gap between simulated physics and real-world behavior can lead to errors when models trained in simulation encounter actual environments.

4. How are world model products used in virtual reality environments?

World models accelerate VR content creation by generating explorable 3D environments from text descriptions, images, or video references. Developers can prototype levels, scenes, and spatial layouts quickly before committing to full production. Generated environments can serve as starting points for game worlds, training simulations, or virtual tours. In entertainment, world models create immersive backdrops for VR experiences. In enterprise, they generate synthetic environments for training – warehouses, factories, or medical facilities – where real-world data collection would be expensive or impractical. The generated content typically requires optimization and additional development for interaction logic, multiplayer functionality, and platform-specific performance tuning.

5. What are the latest advancements in self-supervised learning for world models?

Self-supervised learning allows world models to learn physics and spatial relationships by predicting what comes next in video sequences – without human labeling. Recent advancements include Google DeepMind’s Genie 3, which generates real-time interactive environments at 24 FPS from text prompts. NVIDIA’s Cosmos platform, trained on 20 million hours of video data, has been downloaded over 2 million times. World Labs’ Marble generates persistent, editable 3D worlds from multiple input types. Runway’s GWM-1 uses frame-by-frame prediction to create physics-aware simulations. These models learn gravity, lighting, object permanence, and material behavior through massive-scale video prediction rather than explicit programming.
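The "without human labeling" part comes from how the training data is constructed: in next-frame prediction, the label for each example is simply the frame that follows. The sketch below builds (past frames, next frame) pairs from an unlabeled toy clip and scores a trivial copy-the-last-frame baseline. Frames are flat lists of floats purely for illustration; production systems typically work with image tensors or learned latent representations at vastly larger scale, and this is not the pipeline of any model named above.

```python
from typing import List, Sequence, Tuple

Frame = List[float]  # a flattened toy frame; real systems use image tensors or latent tokens


def next_frame_pairs(video: Sequence[Frame], context: int) -> List[Tuple[List[Frame], Frame]]:
    """Turn an unlabeled clip into (past frames, next frame) training pairs.

    The target is just the frame that follows, so no human annotation is required.
    """
    return [(list(video[i - context:i]), video[i]) for i in range(context, len(video))]


def mse(predicted: Frame, actual: Frame) -> float:
    """Mean squared error between a predicted and an actual frame."""
    return sum((p - a) ** 2 for p, a in zip(predicted, actual)) / len(actual)


def copy_last_frame(past: List[Frame]) -> Frame:
    """Trivial baseline: predict that nothing changes. A useful model must beat this."""
    return past[-1]


# Toy "video": one bright pixel moving one step to the right per frame in a 5-pixel strip.
video = [[1.0 if x == t else 0.0 for x in range(5)] for t in range(5)]
pairs = next_frame_pairs(video, context=2)
baseline_loss = sum(mse(copy_last_frame(past), target) for past, target in pairs) / len(pairs)
print(f"{len(pairs)} training pairs, baseline loss {baseline_loss:.2f}")
# 3 training pairs, baseline loss 0.40
```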

6. What is model-based reinforcement learning?

Model-based reinforcement learning is an approach where an AI builds an internal simulation of how an environment works, then practices and plans within that simulation before acting in the real world. Instead of learning purely through trial and error in physical environments – which is slow, expensive, and potentially dangerous – the agent imagines outcomes and refines its behavior in simulation. World models enable this approach by providing realistic virtual environments where robots, autonomous vehicles, or game agents can train safely. A robot can simulate thousands of grasping attempts in a world model before attempting the task on real hardware – reducing training time, cost, and risk of damage.
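The "practice in simulation before acting" loop can be sketched with random-shooting model-predictive control, one simple model-based planning method: sample candidate action sequences, roll each one out inside the model, execute only the first action of the cheapest sequence, then replan. In the sketch below, `model` is a hand-written 1D point-mass stand-in for a learned world model, and the goal, horizon, sample count, and cost weights are illustrative assumptions.

```python
import random
from typing import List, Optional, Tuple

State = Tuple[float, float]  # (position, velocity) of a 1D point mass


def model(state: State, action: float, dt: float = 0.1) -> State:
    """Stand-in for a learned world model: predict the next state from state and action."""
    pos, vel = state
    vel = vel + action * dt          # the action is treated as an acceleration
    return (pos + vel * dt, vel)


def rollout_cost(state: State, actions: List[float], goal: float) -> float:
    """Imagine a trajectory entirely inside the model and score it (lower is better)."""
    cost = 0.0
    for a in actions:
        state = model(state, a)
        cost += (state[0] - goal) ** 2 + 0.01 * a ** 2  # distance to goal plus effort
    return cost


def plan(state: State, goal: float, horizon: int = 10, samples: int = 200,
         rng: Optional[random.Random] = None) -> float:
    """Random-shooting MPC: return the first action of the cheapest imagined sequence."""
    if rng is None:
        rng = random.Random(0)
    best_cost, best_first = float("inf"), 0.0
    for _ in range(samples):
        actions = [rng.uniform(-1.0, 1.0) for _ in range(horizon)]
        cost = rollout_cost(state, actions, goal)
        if cost < best_cost:
            best_cost, best_first = cost, actions[0]
    return best_first


# Control loop: plan in imagination, act once, observe, replan.
rng = random.Random(42)
state: State = (0.0, 0.0)
for _ in range(50):
    action = plan(state, goal=1.0, rng=rng)
    state = model(state, action)  # here the "real" environment is simulated by the same model
print(f"final position: {state[0]:.2f}")
```

Replanning at every step is the key design choice: because only the first action is executed, errors in the model's longer-range predictions are corrected by fresh observations. In a real system the executed action would go to the actual environment or robot, not back into the same model.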